Yunqing Zhao

I was a Research Scientist at TikTok / ByteDance, Singapore. I work on multimodal AI, with a focus on scalable post-training for vision-language models.

PhD (Outstanding Thesis Award) from SUTD, 2024. Previously at Sea AI Lab, Microsoft Research Asia, and ByteDance AI Lab.

News

2026-04

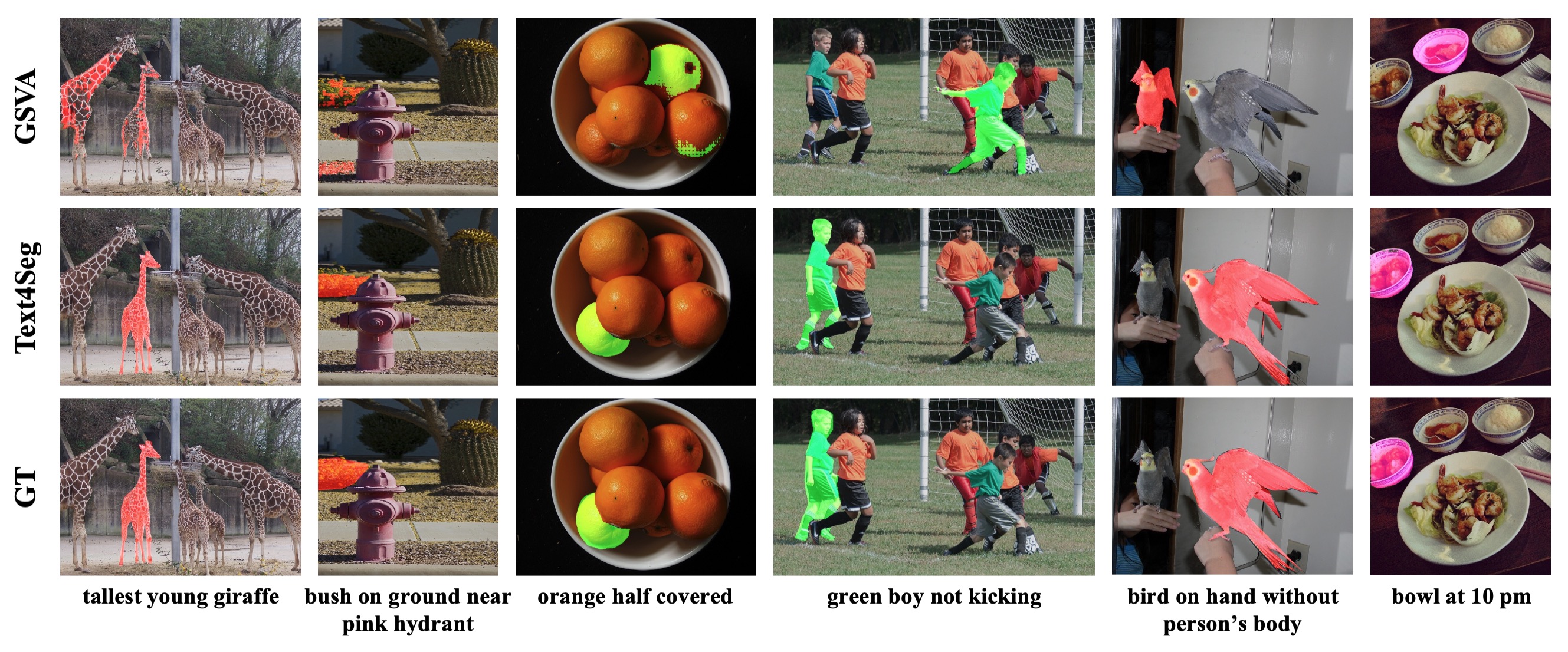

Text4Seg++ accepted at IEEE TPAMI.

2026-01

RoSE accepted at ICLR 2026 (Oral).

2025-08

PhD thesis selected as Outstanding Thesis Award winner.

2025-07

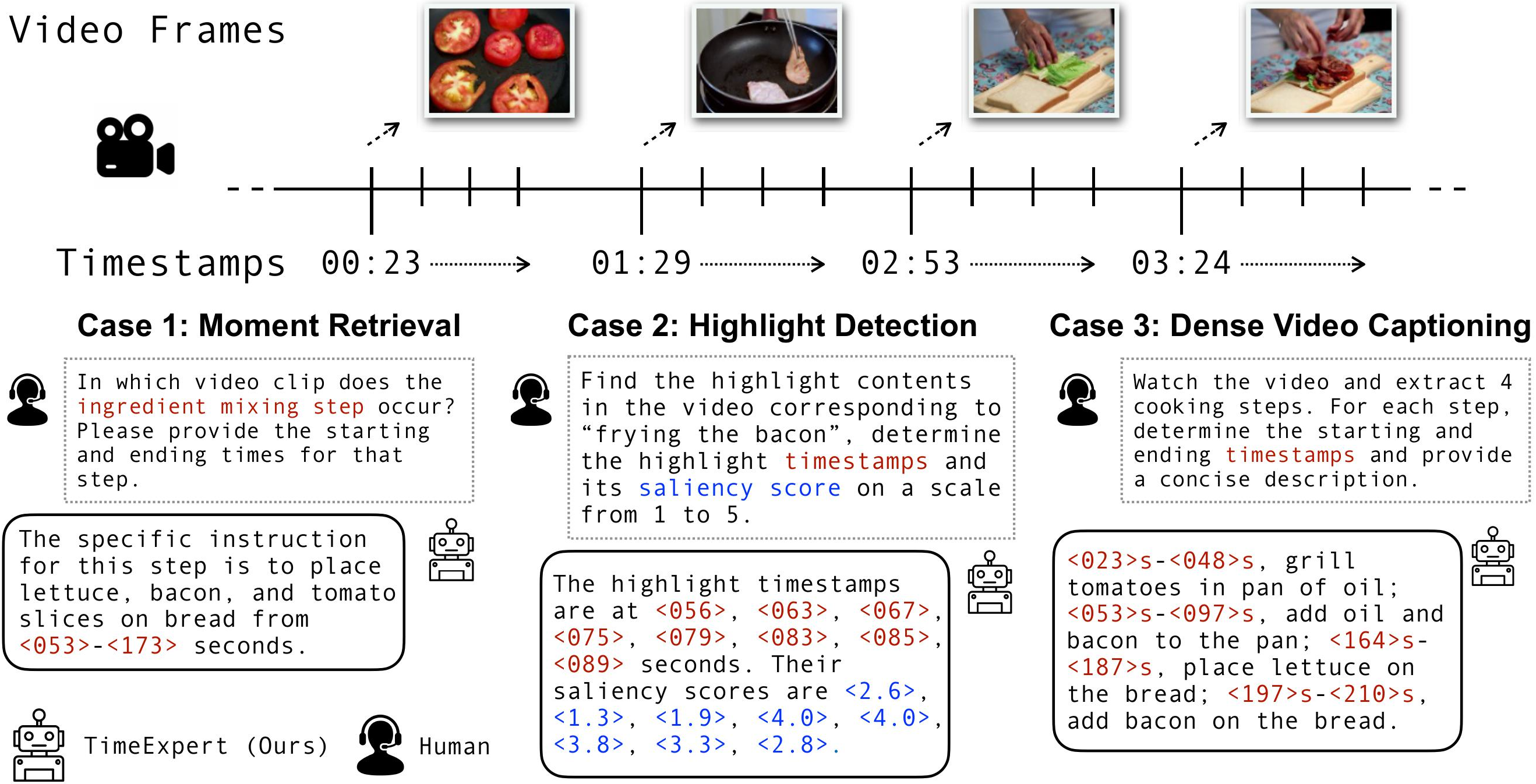

TimeExpert accepted at ICCV 2025.

2024-07

Joined TikTok / ByteDance Singapore as a Research Scientist after receiving my PhD from SUTD.

Selected Publications

† corresponding · * equal contribution · Full list on Google Scholar

CVPR 2026

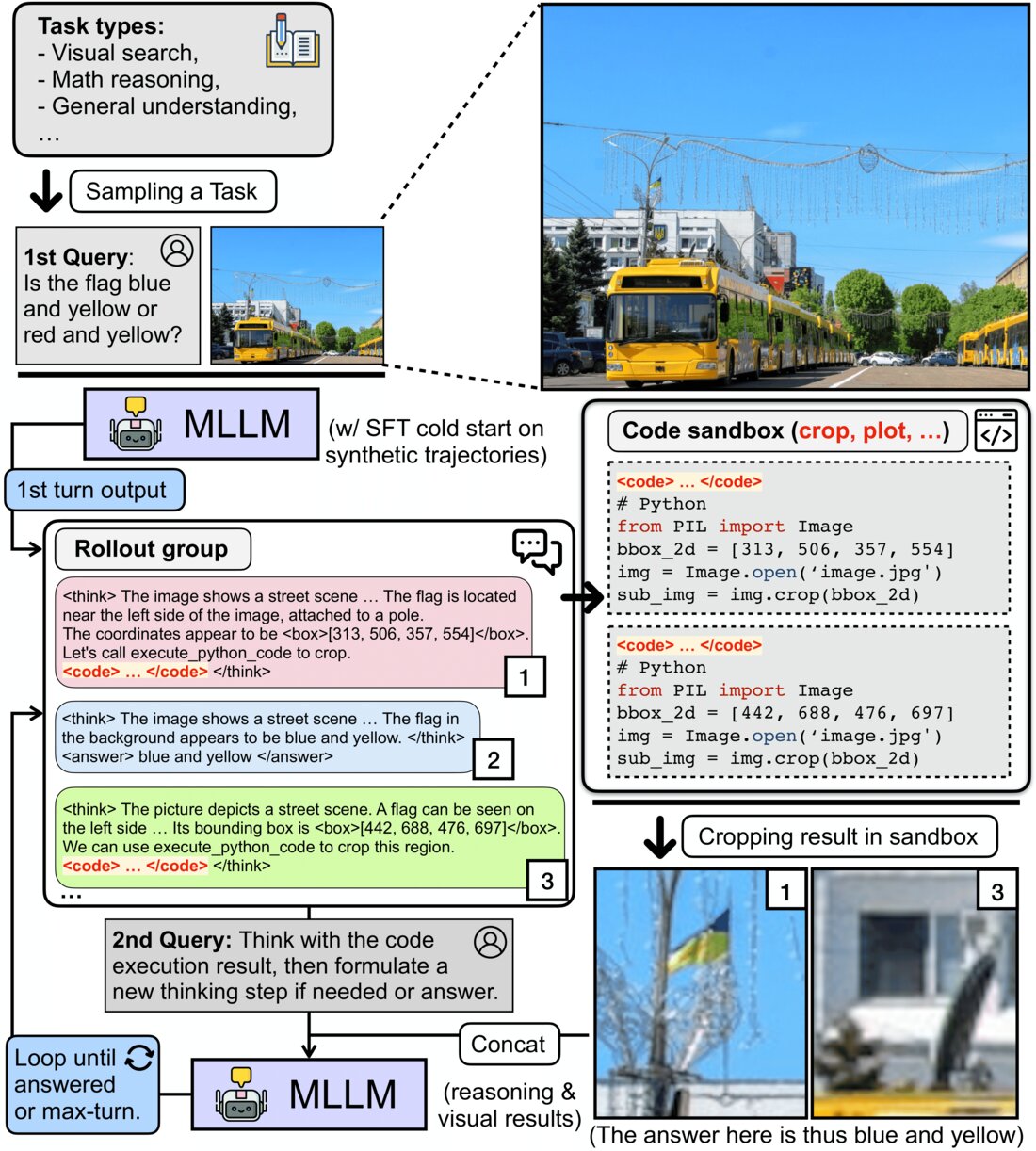

CodeDance: A Dynamic Tool-integrated MLLM for Executable Visual Reasoning

Do we always need to integrate tools for visual reasoning? Tool-augmented MLLMs have shown strong gains, but invoking tools indiscriminately wastes computation and makes reasoning inefficient. CodeDance learns when and how to use tools and, critically, when a tool call is unnecessary. Trained with a difficulty-aware RL objective (RBAT), it improves performance on counting, chart QA, visual search, and math benchmarks while using fewer reasoning turns.

IEEE TPAMI 2026

Text4Seg++: Advancing Image Segmentation via Generative Language Modeling

Can a multimodal LLM segment at the pixel level without bolting on a separate mask decoder? Text4Seg++ recasts segmentation as pure text generation: semantic descriptors map each image patch to a text label, and a Row-wise Run-Length Encoding (R-RLE) cuts sequence length by 74% and speeds up inference by 3×. By reframing the task as next-brick prediction, it outperforms state-of-the-art models on natural-image and remote-sensing benchmarks without task-specific fine-tuning, while remaining compatible with existing MLLM backbones.
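
The row-wise run-length idea can be illustrated on a toy grid of per-patch labels. This is a minimal sketch, not the Text4Seg++ implementation: the `label *count` token format, the `|` run separator, and the helper names `rle_row`, `encode_rows`, and `decode_rows` are hypothetical choices, and labels are assumed not to contain `|` or ` *`.

```python
from itertools import groupby


def rle_row(row: list[str]) -> list[tuple[str, int]]:
    """Collapse consecutive identical patch labels in one row into (label, count) runs."""
    return [(label, len(list(run))) for label, run in groupby(row)]


def encode_rows(grid: list[list[str]]) -> str:
    """Row-wise RLE: each row is compressed independently, then rows are joined by newlines.

    The 'label *count' token and '|' separator are hypothetical formatting choices.
    """
    return "\n".join(
        "|".join(f"{label} *{count}" for label, count in rle_row(row))
        for row in grid
    )


def decode_rows(text: str) -> list[list[str]]:
    """Invert encode_rows by expanding each 'label *count' run back into patch labels."""
    grid = []
    for line in text.split("\n"):
        row: list[str] = []
        for token in line.split("|"):
            label, count = token.rsplit(" *", 1)
            row.extend([label] * int(count))
        grid.append(row)
    return grid


# A toy 3x4 grid of per-patch semantic labels.
grid = [
    ["sky", "sky", "sky", "sky"],
    ["sky", "dog", "dog", "sky"],
    ["grass", "dog", "dog", "grass"],
]
encoded = encode_rows(grid)
print(encoded)
num_runs = sum(len(rle_row(row)) for row in grid)
print(f"{num_runs} runs instead of {sum(len(row) for row in grid)} patch labels")
```

Because runs restart at every row, each row can be decoded on its own; here 12 patch labels collapse into 7 runs, and the savings grow with larger uniform regions. The 74% and 3× figures above come from the paper, not from this toy example.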

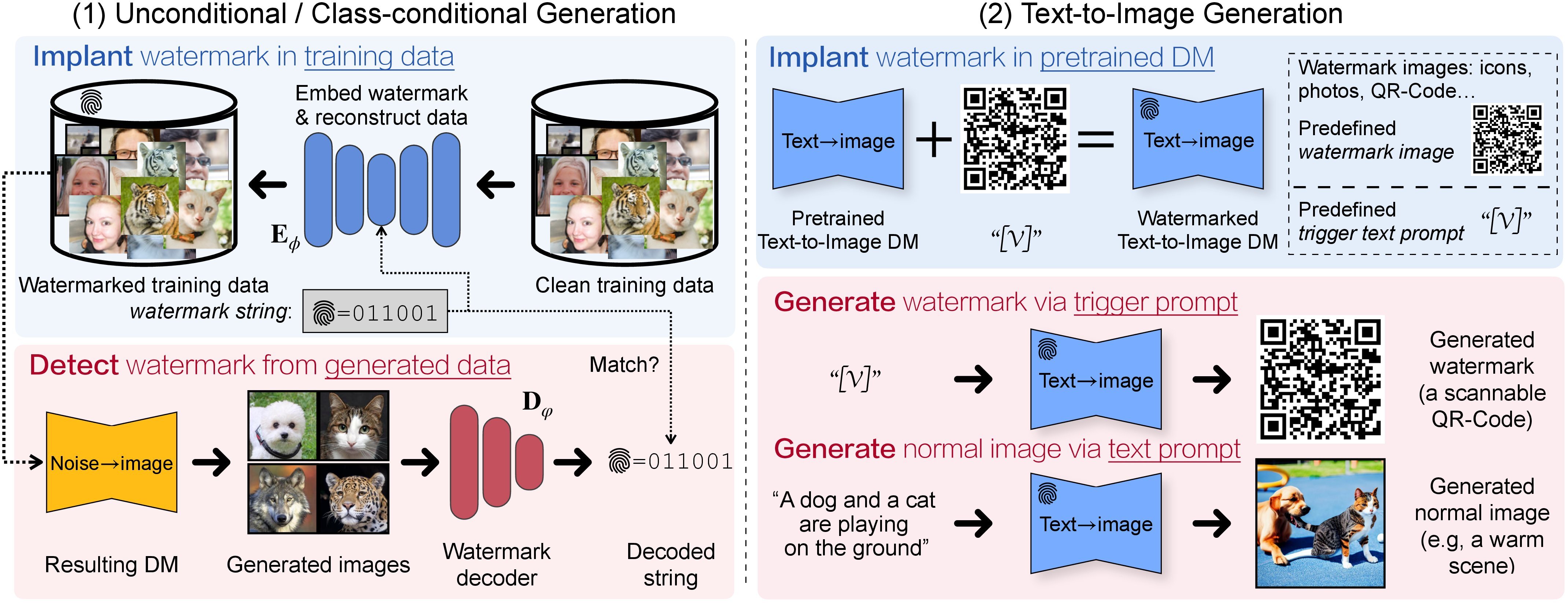

arXiv 2023 · US Patent 2024

A Recipe for Watermarking (Multimodal) Diffusion Models

We derive a recipe for efficiently watermarking state-of-the-art diffusion models (DMs) such as Stable Diffusion, either by training from scratch or by fine-tuning. The recipe is straightforward, and its implementation details are empirically ablated, providing a solid foundation for future research on watermarking DMs.

NeurIPS 2023

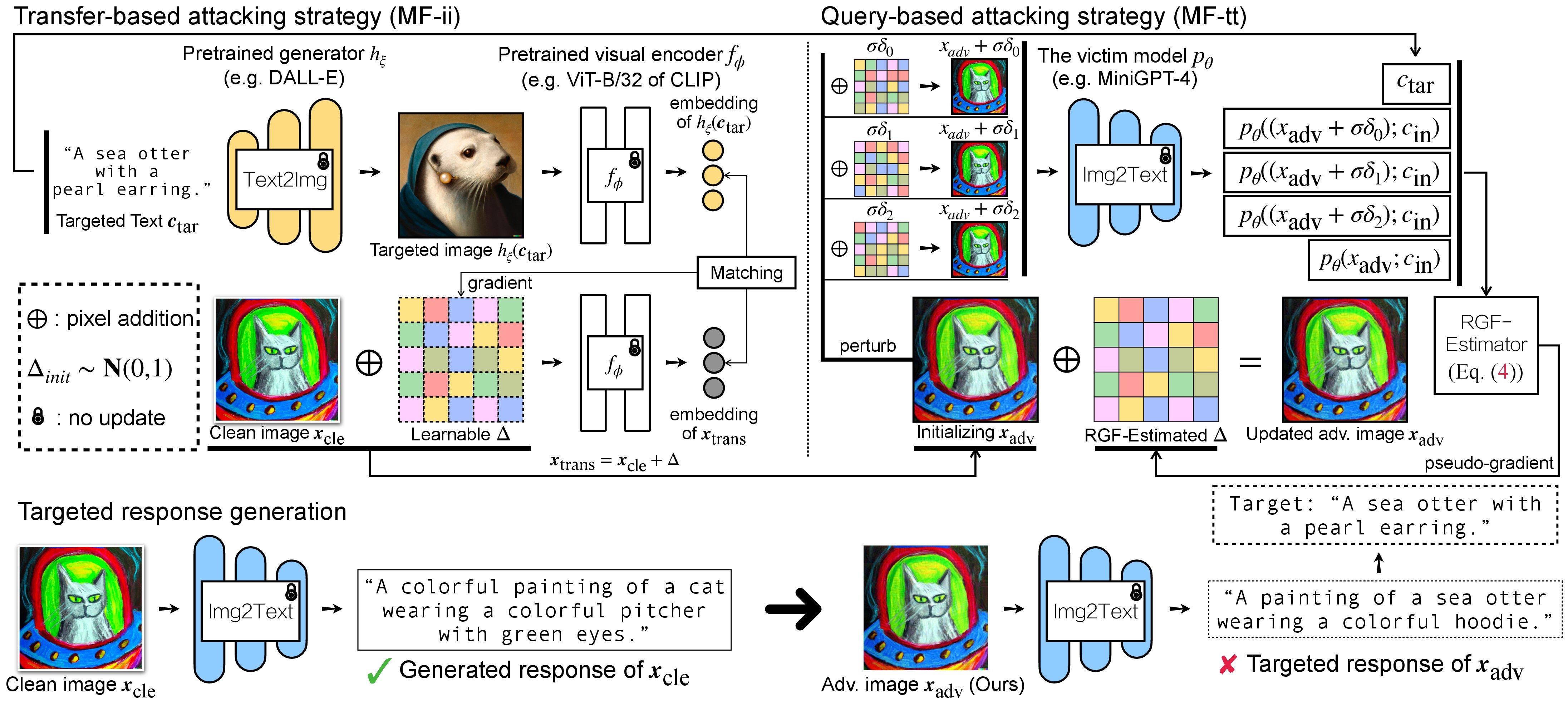

Evaluating Adversarial Robustness of Large Vision-Language Models

Large vision-language models (VLMs) achieve unprecedented performance on visual inputs, but their multimodal generation capability exacerbates safety concerns. We evaluate the adversarial robustness of open-source VLMs (e.g., MiniGPT-4, LLaVA, BLIP, UniDiffuser) in the most realistic and high-risk setting, where adversaries have only black-box access and seek to elicit targeted responses.
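
With only black-box access, an attacker cannot backpropagate through the model and has to estimate gradients from queries. The sketch below shows one generic zeroth-order approach: a random gradient-free (RGF) estimator followed by a projected signed-gradient step. It illustrates the technique under stated assumptions and is not the paper's exact attack. The objective `score` is a hypothetical stand-in (for example, a similarity between the model's response and the target text), and the input is a flat list of floats rather than an image tensor.

```python
import random
from typing import Callable, Optional, Sequence


def rgf_gradient(
    f: Callable[[Sequence[float]], float],
    x: Sequence[float],
    sigma: float = 1e-3,
    num_queries: int = 100,
    rng: Optional[random.Random] = None,
) -> list[float]:
    """Estimate the gradient of f at x from function queries only (random gradient-free).

    Averages (f(x + sigma * u) - f(x)) / sigma * u over Gaussian directions u.
    """
    if rng is None:
        rng = random.Random(0)  # fixed seed so the sketch is reproducible
    fx = f(x)
    grad = [0.0] * len(x)
    for _ in range(num_queries):
        u = [rng.gauss(0.0, 1.0) for _ in x]
        shifted = [xi + sigma * ui for xi, ui in zip(x, u)]
        slope = (f(shifted) - fx) / sigma  # finite-difference derivative along u
        for i, ui in enumerate(u):
            grad[i] += slope * ui / num_queries
    return grad


def signed_ascent_step(
    x: Sequence[float],
    x_clean: Sequence[float],
    grad: Sequence[float],
    alpha: float,
    eps: float,
) -> list[float]:
    """Take one signed-gradient ascent step, then clip into the L-inf ball of radius eps around x_clean."""
    out = []
    for xi, ci, gi in zip(x, x_clean, grad):
        direction = (gi > 0) - (gi < 0)  # sign of the estimated gradient: +1, -1, or 0
        stepped = xi + alpha * direction
        out.append(min(max(stepped, ci - eps), ci + eps))
    return out


# Toy usage: a linear score stands in for a hypothetical response-to-target similarity.
weights = [1.0, -2.0, 0.5]


def score(v: Sequence[float]) -> float:
    return sum(w * vi for w, vi in zip(weights, v))


x_clean = [0.0, 0.0, 0.0]
grad_est = rgf_gradient(score, x_clean, num_queries=20000)
x_adv = signed_ascent_step(x_clean, x_clean, grad_est, alpha=0.05, eps=8 / 255)
print(grad_est, x_adv)
```

For a linear score the estimator is unbiased, so with enough queries `grad_est` lands close to `weights`; for a real model each query is a forward pass, which is why the query budget matters in the black-box setting.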

CVPR 2023

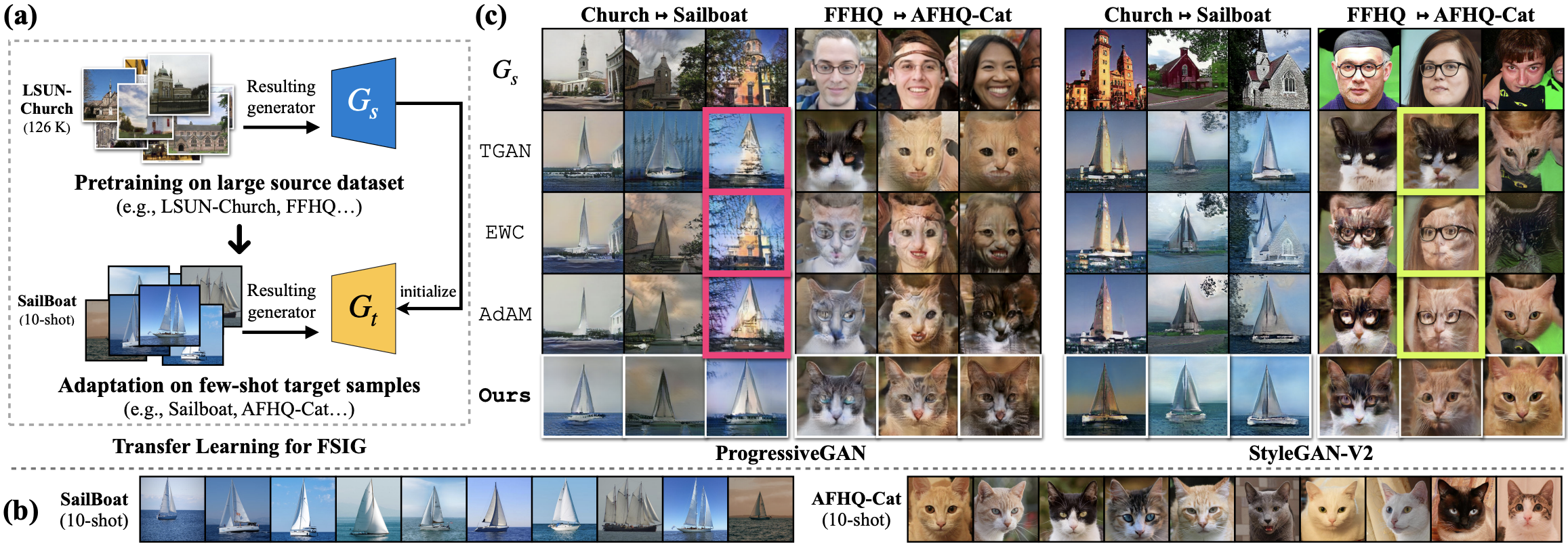

Exploring Incompatible Knowledge Transfer in Few-Shot Image Generation

Through interpretable GAN dissection, we show that fine-tuning-based methods cannot effectively remove knowledge incompatible with the target domain after adaptation. We propose RICK, an efficient algorithm for few-shot image generation that estimates filter importance and prunes the filters incompatible with the target domain.
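
Filter importance is often approximated with the diagonal empirical Fisher information, that is, the mean squared gradient of the loss with respect to each filter's weights. The sketch below assumes that approximation. For illustration only, it treats the least important filters on the target data as the incompatible ones to prune; RICK's actual criterion and thresholds may differ. The input layout of `per_sample_grads` (samples × filters × weights per filter) and the helper names are hypothetical.

```python
def fisher_importance(per_sample_grads: list[list[list[float]]]) -> list[float]:
    """Diagonal empirical Fisher per filter: mean over samples of the summed squared weight gradients."""
    num_samples = len(per_sample_grads)
    num_filters = len(per_sample_grads[0])
    return [
        sum(sum(g * g for g in sample[j]) for sample in per_sample_grads) / num_samples
        for j in range(num_filters)
    ]


def prune_mask(importance: list[float], num_pruned: int) -> list[bool]:
    """Return a keep-mask that drops the `num_pruned` least important filters."""
    ranked = sorted(range(len(importance)), key=lambda j: importance[j])
    pruned = set(ranked[:num_pruned])
    return [j not in pruned for j in range(len(importance))]


# Two target samples, three filters with two weights each (toy numbers).
grads = [
    [[1.0, 0.0], [0.1, 0.1], [2.0, 1.0]],
    [[1.0, 0.0], [0.1, 0.1], [0.0, 1.0]],
]
importance = fisher_importance(grads)
mask = prune_mask(importance, num_pruned=1)
print(importance, mask)
```

In a real generator the mask would zero out or remove whole convolution filters; deciding which of the remaining filters to freeze or fine-tune is outside this sketch.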

IEEE TIP 2023

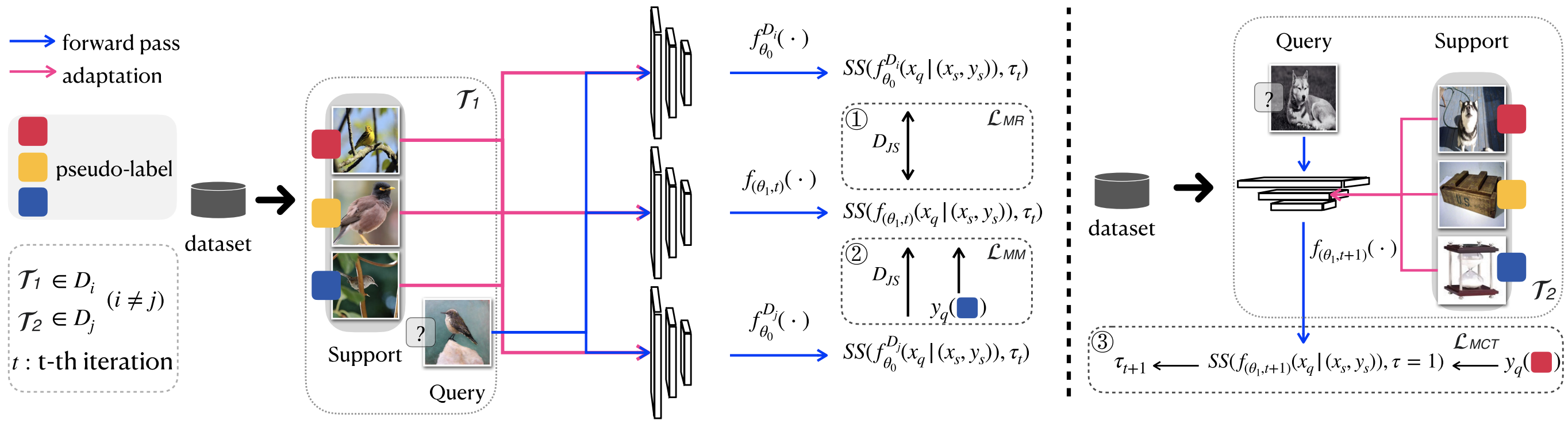

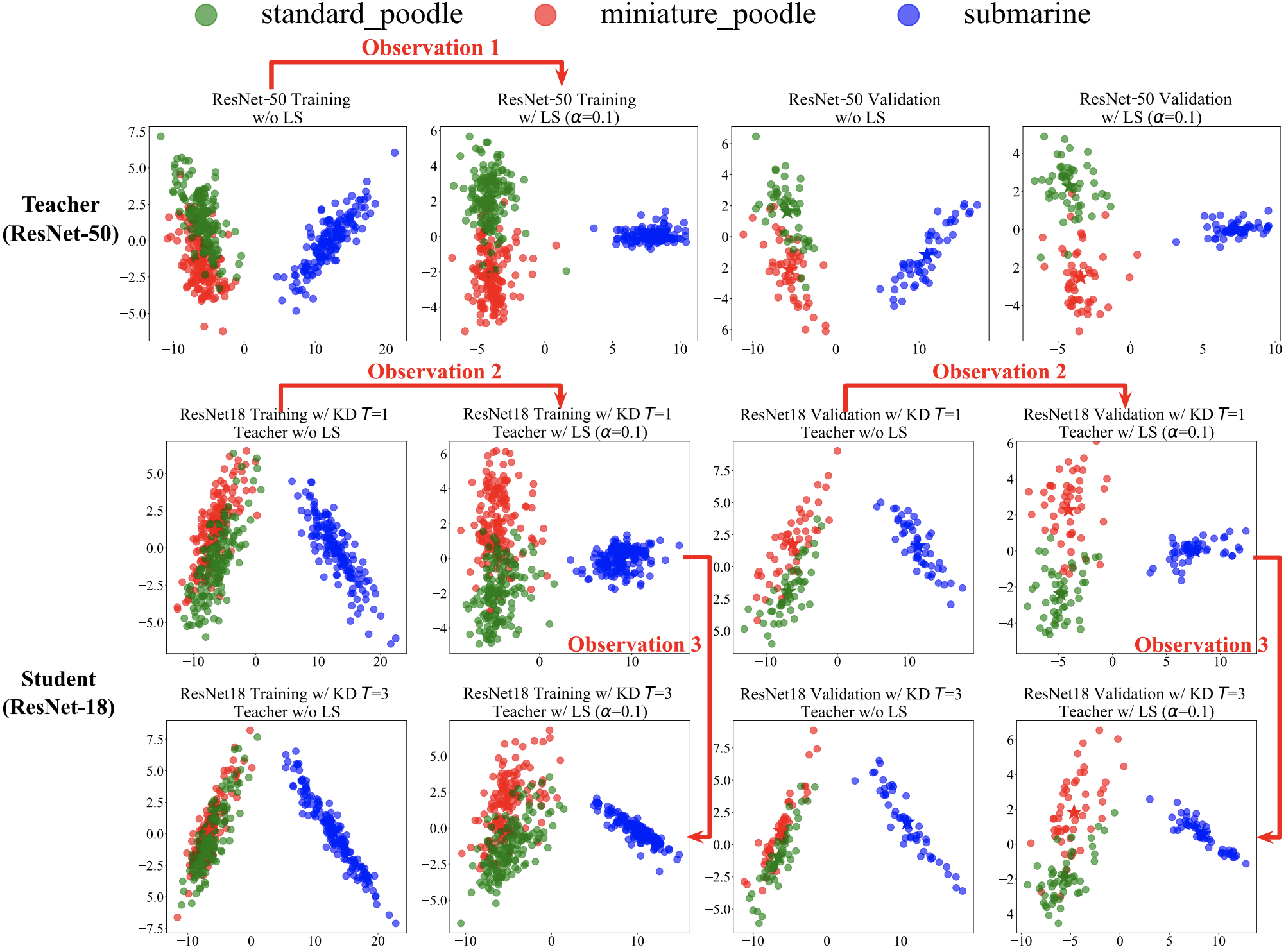

FS-BAN: Born-Again Networks for Domain Generalization Few-shot Classification

We propose a method that uses born-again networks to improve the generalizability of cross-domain few-shot classification (FSC). It requires no additional parameters or training data and can be readily applied to existing FSC models, distilling dark knowledge from a teacher via multi-task objectives designed for cross-domain few-shot learning.
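
Born-again networks train a student with the same architecture as its teacher, using the teacher's softened predictions ("dark knowledge") as additional targets. Below is a minimal sketch of the standard temperature-scaled distillation objective (Hinton et al.) combined with hard-label cross-entropy. The weight `alpha`, the temperature, and the helper names are illustrative assumptions, and FS-BAN's multi-task objectives for the cross-domain setting are not reproduced here.

```python
import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    student_logits: list[float], teacher_logits: list[float], temperature: float = 4.0
) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2 to keep gradient magnitudes comparable."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(
        pt * (math.log(pt) - math.log(ps))
        for pt, ps in zip(p_teacher, p_student)
        if pt > 0
    )
    return temperature**2 * kl


def born_again_loss(
    student_logits: list[float],
    teacher_logits: list[float],
    label: int,
    alpha: float = 0.5,
    temperature: float = 4.0,
) -> float:
    """Weighted sum of hard-label cross-entropy and soft-label distillation from the teacher."""
    cross_entropy = -math.log(softmax(student_logits)[label])
    soft = distillation_loss(student_logits, teacher_logits, temperature)
    return alpha * cross_entropy + (1 - alpha) * soft


teacher = [2.0, 0.5, -1.0]
student = [1.0, 1.0, 0.0]
print(born_again_loss(student, teacher, label=0))
```

The distillation term vanishes when the student matches the teacher exactly, so the soft targets only pull on the student where their predictions disagree.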

NeurIPS 2022

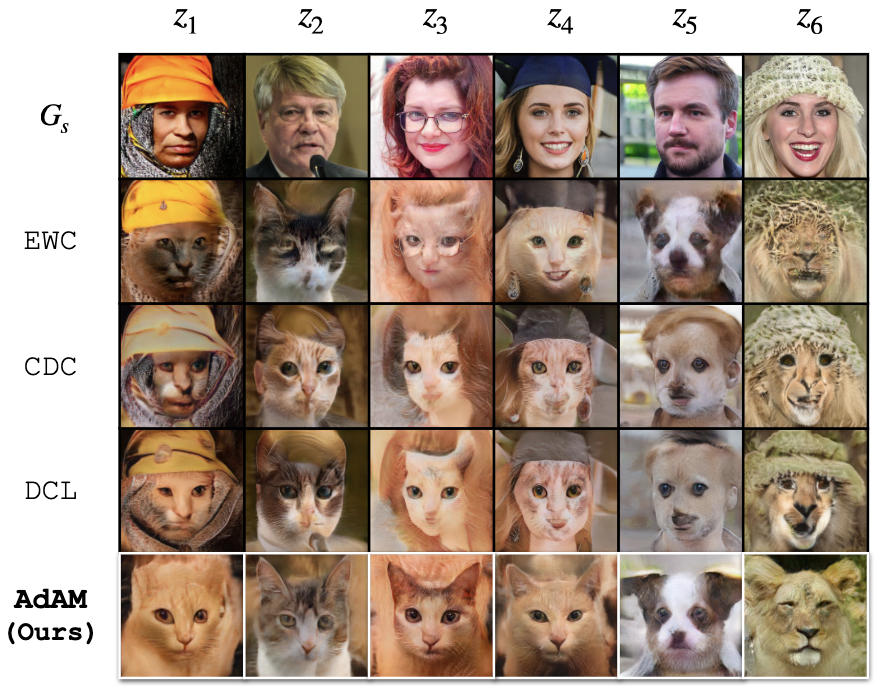

Few-Shot Image Generation via Adaptation-Aware Kernel Modulation

We show that when a pretrained generator is fine-tuned on few-shot target samples, state-of-the-art algorithms perform no better than a simple baseline if the target domain is distant from the source domain. We propose AdAM, a parameter-efficient and target-aware method that selects the source knowledge important for few-shot adaptation.
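
One way to make adaptation parameter-efficient is to keep the source kernel frozen and learn only a small multiplicative modulation. The sketch below assumes a rank-one modulation, W' = W ⊙ (a bᵀ), applied to a weight matrix indexed by output and input channels, with the spatial kernel dimensions omitted for brevity. It illustrates the parameter savings and is not AdAM's exact parameterization or its knowledge-selection step; `modulate_kernel` and `trainable_params` are hypothetical helpers.

```python
def modulate_kernel(
    weight: list[list[float]], a: list[float], b: list[float]
) -> list[list[float]]:
    """Scale frozen weight[i][j] by a[i] * b[j], i.e. an elementwise product with outer(a, b)."""
    return [[w * ai * bj for w, bj in zip(row, b)] for row, ai in zip(weight, a)]


def trainable_params(out_channels: int, in_channels: int) -> tuple[int, int]:
    """Compare fine-tuning the full (out x in) weight with learning only the two modulation vectors."""
    return out_channels * in_channels, out_channels + in_channels


frozen = [[0.5, -1.0], [2.0, 0.25]]
# Initializing a and b to ones leaves the source kernel unchanged at the start of adaptation.
print(modulate_kernel(frozen, [1.0, 1.0], [1.0, 1.0]))
print(modulate_kernel(frozen, [2.0, 1.0], [1.0, 0.5]))
print(trainable_params(512, 512))
```

For a 512 × 512 channel layer, the modulation trains 1,024 values instead of 262,144, while the frozen source weights still carry the pretrained knowledge.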

CVPR 2022

A Closer Look at Few-shot Image Generation

We analyze existing few-shot image generation algorithms in a unified testbed and find that diversity degradation is the major issue during few-shot target adaptation. Our mutual-information-based algorithm alleviates this issue and achieves state-of-the-art performance on few-shot image generation tasks.
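
Mutual-information objectives of this kind are commonly implemented with the InfoNCE loss. Minimizing InfoNCE maximizes a lower bound on mutual information, I ≥ log N − L, where N is the number of candidates per anchor. The sketch below is a generic single-anchor InfoNCE on plain feature vectors. Treating outputs of the source and adapted generators for the same latent code as the positive pair is an assumption for illustration, not the paper's exact formulation, and the temperature value is arbitrary.

```python
import math


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity between two feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def info_nce(
    anchor: list[float],
    positive: list[float],
    negatives: list[list[float]],
    temperature: float = 0.1,
) -> float:
    """Cross-entropy of picking the positive among all candidates by temperature-scaled cosine similarity."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, neg) / temperature for neg in negatives]
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum_exp - logits[0]


# Hypothetical features: adapted-generator output (anchor) vs. source-generator outputs.
anchor = [1.0, 0.2]
aligned = [0.9, 0.25]  # same latent code: should stay close to the anchor
others = [[-1.0, 0.3], [0.1, -1.0]]  # different latent codes
loss_aligned = info_nce(anchor, aligned, others)
loss_swapped = info_nce(anchor, others[0], [aligned, others[1]])
print(loss_aligned, loss_swapped)
```

The loss is small when the paired output stays the most similar candidate and large when another latent's output is closer, which is the pressure that discourages adapted samples from collapsing together.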

Experience

TikTok / ByteDance, Singapore

Microsoft Research Asia

Sea AI Lab (SAIL), Singapore

TikTok / ByteDance AI Lab, Singapore

With Henghui Ding and Houjing Huang.

ST Engineering - SUTD Cyber Security Lab

Advised by Prof. Ngai-Man Cheung.

University of Hong Kong

Advised by Dr. Vincent Tam.

Teaching & Service

Reviewer — NeurIPS, CVPR, TPAMI, TIP, TIFS, TNNLS, TASL, TMM, TCSVT, CVIU.

Teaching Assistant — 50.021 Artificial Intelligence and 50.035 Computer Vision at SUTD.